Windows Server 2012 supports the concept of converged networking, where different types of network traffic share the same Ethernet network infrastructure. In previous versions of Windows Server, the typical recommendation for a failover cluster was to dedicate separate physical network adapters to different traffic types.

Overview of different network traffic types

When you deploy a Hyper-V cluster, you must plan for several types of network traffic. The following table summarizes the different traffic types.

| Network Traffic Type | Description |
|---|---|
| Management network | Used between the operating system of the physical Hyper-V host (also known as the management operating system) and basic infrastructure functionality such as Active Directory Domain Services (AD DS). Also used to manage the Hyper-V management operating system and virtual machines. Settings: enable both "Allow cluster network communication on this network" and "Allow clients to connect through this network". |
| Cluster network | Used for inter-node cluster communication such as the cluster heartbeat, and for Cluster Shared Volumes (CSV) redirection. Settings: select "Allow cluster network communication on this network" and clear the "Allow clients to connect through this network" check box. |
| Live migration network | Used for virtual machine live migration. Configure this network as a cluster-communications-only network. By default, the cluster automatically chooses the NIC for live migration. Use a dedicated network or VLAN for live migration traffic to ensure quality of service and for traffic isolation and security. Settings: select "Allow cluster network communication on this network" and clear the "Allow clients to connect through this network" check box. |
| Storage (iSCSI) network | Used for SMB traffic or for iSCSI traffic. Disable cluster communication so that the network is dedicated to storage-related traffic. Settings: select "Do not allow cluster network communication on this network". |
| Replica traffic | Used for virtual machine replication through the Hyper-V Replica feature. In Windows Server 2012 and 2012 R2 it is not possible to dedicate a network to Hyper-V replication as you can for live migration; Hyper-V Replica uses the available network interfaces to transmit replication traffic. Settings: enable both "Allow cluster network communication on this network" and "Allow clients to connect through this network". |
| CSV network | When you enable Cluster Shared Volumes (CSV), the failover cluster automatically chooses the network that appears to be the best for CSV communication. However, you can designate the network by using the cluster network property Metric. The lowest Metric value designates the network for CSV and internal cluster communication. The network that you configure for CSV must be enabled for "Cluster Communications" in the Failover Cluster Manager snap-in under Networks. |
| Virtual machine access | Used for virtual machine connectivity. Typically requires external network connectivity to service client requests. |

Failover Cluster Networks

The Failover Clustering network driver detects the networks on each node, and a cluster network is created automatically for every logical subnet connected to all nodes in the cluster. Each network adapter connected to a common subnet is listed in Failover Cluster Manager under the Networks section.

Assigning more than one network adapter per subnet (including IPv6 link-local) is not recommended, because the cluster uses only one adapter and ignores the other.
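The subnet-based grouping can also be inspected from Windows PowerShell. The following is a minimal sketch that assumes it runs on a cluster node with the FailoverClusters module available; it only reads information and changes nothing.

```powershell
Import-Module FailoverClusters

# One row per cluster network (that is, per subnet), with its role value.
Get-ClusterNetwork | Format-Table Name, Address, AddressMask, Role

# One row per network adapter, showing the cluster network it was grouped into.
Get-ClusterNetworkInterface | Format-Table Network, Node, Name, State
```

If two adapters on the same node appear under the same network, they share a subnet, which is the situation the recommendation above advises against.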

In Failover Cluster Manager, the Networks section therefore groups networks by subnet, not by network adapter.

The network roles are configured automatically during cluster creation. The following table describes the role values that a cluster network can have.

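| Name | Value | Description |
|---|---|---|
| Disabled (do not allow) for cluster communication | 0 | The cluster does not use the network; suited to a dedicated storage (iSCSI) network. |
| Enabled for cluster communication only | 1 | Carries internal cluster traffic such as heartbeats and CSV redirection; clients cannot connect through it. |
| Enabled for client and cluster communication | 3 | Carries both cluster traffic and client connections, as on the management network. |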

Though the cluster networks are automatically configured while creating the cluster as described above, they can also be manually configured based on the requirements in the environment.

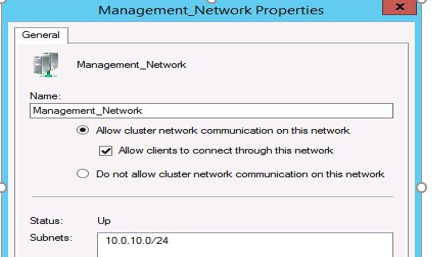

To modify the network settings, open Failover Cluster Manager, click Networks, open the Properties of the network, and change the option.

Network Binding Order (Best Practice):

The Adapters and Bindings tab lists the connections in the order in which the connections are accessed by network services. The order of these connections reflects the order in which generic TCP/IP calls/packets are sent on to the wire.

How to change the binding order of network adapters

- Click Start, click Run, type ncpa.cpl, and then click OK. You can see the available connections in the LAN and High-Speed Internet section of the Network Connections window.

- Press ALT+N to bring up the Advanced menu.

- On the Advanced menu, click Advanced Settings, and then click the Adapters and Bindings tab.

- In the Connections area, select the connection that you want to move higher in the list. Use the arrow buttons to move the connection. As a general rule, the card that talks to the network (domain connectivity, routing to other networks, and so on) should be the first bound card (top of the list).

Cluster nodes are multi-homed systems. Network priority affects DNS Client for outbound network connectivity. Network adapters used for client communication should be at the top in the binding order.

Non-routed networks can be placed at lower priority. In Windows Server 2012/2012R2, the Cluster Network Driver (NETFT.SYS) adapter is automatically placed at the bottom in the binding order list.

Multi-Subnet Clusters:

Failover Clustering supports having nodes reside in different IP Subnets. Cluster Shared Volumes (CSV) in Windows Server 2012 as well as SQL Server 2012 support multi-subnet Clusters.

The general rule has been to have one network for each role that it provides. Configure the cluster networks with the following in mind.

Management traffic

A management network provides connectivity between the operating system of the physical Hyper-V host (also known as the management operating system) and basic infrastructure functionality such as Active Directory Domain Services (AD DS), Domain Name System (DNS), and Windows Server Update Services (WSUS). It is also used for management of the server that is running Hyper-V and the virtual machines.

The management network must have connectivity between all required infrastructure, and to any location from which you want to manage the server.

Isolate traffic on the management network

We recommend that you use a firewall or IPsec encryption, or both, to isolate management traffic. In addition, you can use auditing to ensure that only defined and allowed communication is transmitted through the management network.

Cluster Network traffic

A failover cluster monitors and communicates the cluster state between all members of the cluster. This communication is very important to maintain cluster health. If a cluster node does not communicate a regular health check (known as the cluster heartbeat), the cluster considers the node down and removes the node from cluster membership. The cluster then transfers the workload to another cluster node.

Inter-node cluster communication also includes traffic that is associated with CSV. For CSV, where all nodes of a cluster can access shared block-level storage simultaneously, the nodes in the cluster must communicate to arrange storage-related activities.

Also, if a cluster node loses its direct connection to the underlying CSV storage, CSV has resiliency features that redirect the storage I/O over the network to another cluster node that can access the storage. All of these activities are carried as cluster network traffic.

Isolate traffic on the cluster network

To isolate inter-node cluster traffic, you can configure a network to either allow cluster network communication or not to allow cluster network communication. For a network that allows cluster network communication, you can also configure whether to allow clients to connect through the network. (This includes client and management operating system access.)

A failover cluster can use any network that allows cluster network communication for cluster monitoring, state communication, and for CSV-related communication.

To configure a network to allow or not to allow cluster network communication, you can use Failover Cluster Manager or Windows PowerShell. To use Failover Cluster Manager, click Networks in the navigation tree. In the Networks pane, right-click a network, and then click Properties.

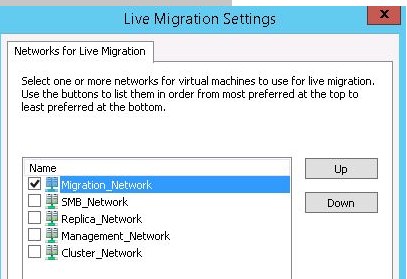
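The same setting can be changed from Windows PowerShell through the Role property of a cluster network, using the values 0, 1, and 3 described earlier. This is a minimal sketch; the network names are hypothetical, so list the real ones with Get-ClusterNetwork first.

```powershell
Import-Module FailoverClusters

# Hypothetical network names; replace them with names from Get-ClusterNetwork.
$clusterOnly = Get-ClusterNetwork -Name "Cluster Network 2"
$storage     = Get-ClusterNetwork -Name "Cluster Network 3"

# 1 = allow cluster communication only (clients cannot connect).
$clusterOnly.Role = 1

# 0 = do not allow cluster communication on this network.
$storage.Role = 0

# Confirm the result.
Get-ClusterNetwork | Format-Table Name, Role
```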

Live migration traffic

As with the CSV network, you need this network if the cluster is a Hyper-V cluster that hosts highly available virtual machines. The live migration network is used to live migrate virtual machines between cluster nodes. Configure this network as a cluster-communications-only network. By default, the cluster automatically chooses the NIC for live migration.

We recommend that you use a dedicated network or VLAN for live migration traffic to ensure quality of service and for traffic isolation and security. Live migration traffic can saturate network links.

You can designate multiple networks as live migration networks in a prioritized list. For example, you may have one fast (10 Gbps) migration network for cluster nodes in the same cluster, and a second, slower (1 Gbps) migration network for cross-cluster migrations.

Isolate traffic on the live migration network

By default, live migration traffic uses the cluster network topology to discover available networks and to establish priority. However, you can manually configure live migration preferences to isolate live migration traffic to only the networks that you define. To do this, you can use Failover Cluster Manager or Windows PowerShell. To use Failover Cluster Manager, in the navigation tree, right-click Networks, and then click Live Migration Settings.
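For the Windows PowerShell route, one possible approach is to exclude every network except the intended one through the MigrationExcludeNetworks parameter of the "Virtual Machine" resource type. The sketch below assumes a cluster network named "Live Migration" (a hypothetical name) and should be checked against your environment before use.

```powershell
Import-Module FailoverClusters

# Hypothetical name of the network reserved for live migration.
$migrationNetwork = "Live Migration"

# IDs of every other cluster network, joined into a semicolon-separated list.
$excluded = (Get-ClusterNetwork |
    Where-Object { $_.Name -ne $migrationNetwork }).Id -join ";"

# Exclude those networks from live migration.
Get-ClusterResourceType -Name "Virtual Machine" |
    Set-ClusterParameter -Name MigrationExcludeNetworks -Value $excluded

# Review the resulting setting.
Get-ClusterResourceType -Name "Virtual Machine" |
    Get-ClusterParameter -Name MigrationExcludeNetworks
```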

Storage (iSCSI) traffic:

If you use iSCSI storage and reach it over the network, it is recommended that the iSCSI storage fabric have a dedicated and isolated network. Disable this network for cluster communication so that it is dedicated to storage-related traffic.

This prevents intra-cluster communication and CSV traffic from flowing over the same network. During cluster creation, iSCSI traffic is detected and the network is disabled for cluster use. Set this network to the lowest position in the binding order.

As with all storage networks, configure multiple network cards to allow redundancy with MPIO. With the Microsoft-provided in-box teaming drivers, network card teaming is supported with iSCSI in Windows Server 2012.

For a virtual machine to be highly available, all members of the Hyper-V cluster must be able to access the virtual machine state. This includes the configuration state and the virtual hard disks.

To meet this requirement, you must have shared storage.

In Windows Server 2012, there are two ways that you can provide shared storage:

- Shared block storage. Shared block storage options include Fibre Channel, Fibre Channel over Ethernet (FCoE), iSCSI, and shared Serial Attached SCSI (SAS).

- File-based storage. Remote file-based storage is provided through SMB 3.0.

SMB 3.0 includes new functionality known as SMB Multichannel. SMB Multichannel automatically detects and uses multiple network interfaces to deliver high performance and highly reliable storage connectivity.

By default, SMB Multichannel is enabled, and requires no additional configuration. You should use at least two network adapters of the same type and speed so that SMB Multichannel is in effect. Network adapters that support RDMA (Remote Direct Memory Access) are recommended but not required.

Both iSCSI and SMB use the network to connect the storage to cluster members. Because reliable storage connectivity and performance are very important for Hyper-V virtual machines, we recommend that you use multiple networks (physical or logical) to ensure that these requirements are met.

Isolate traffic on the storage network

To isolate SMB storage traffic, you can use Windows PowerShell to set SMB Multichannel constraints. SMB Multichannel constraints restrict SMB communication between a given file server and the Hyper-V host to one or more defined network interfaces.
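As a minimal sketch of such a constraint, run on the Hyper-V host: the file server name and the adapter aliases below are hypothetical placeholders, not values from this environment.

```powershell
# Restrict SMB traffic to the file server "FileServer1" (hypothetical name)
# to the two adapters named "Storage1" and "Storage2" (hypothetical aliases).
New-SmbMultichannelConstraint -ServerName "FileServer1" -InterfaceAlias "Storage1", "Storage2"

# Review the constraints that are currently defined.
Get-SmbMultichannelConstraint
```

The constraint applies only to connections to the named server, so SMB traffic to other servers is unaffected.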

To isolate iSCSI traffic, configure the iSCSI target with interfaces on a dedicated network (logical or physical). Use the corresponding interfaces on the cluster nodes when you configure the iSCSI initiator.

Replica traffic

Hyper-V Replica provides asynchronous replication of Hyper-V virtual machines between two hosting servers or Hyper-V clusters. Replica traffic occurs between the primary and Replica sites.

Hyper-V Replica automatically discovers and uses available network interfaces to transmit replication traffic. Currently, it is not possible to dedicate a network to Hyper-V replication as you can for live migration.

To throttle and control the replica traffic bandwidth, you can define QoS policies with minimum bandwidth weight.
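A QoS policy of this kind might look like the following sketch. It assumes that replication traffic is sent to TCP port 443 (the usual HTTPS port for certificate-based replication); the policy name and the weight of 10 are illustrative choices, so adjust the port to the one your Replica server listens on.

```powershell
# Assumption: the Replica server listens on port 443; change this if it uses another port.
# The weight is relative to the weights of the other QoS policies on the host.
New-NetQosPolicy -Name "Hyper-V Replica" -IPDstPortMatchCondition 443 -MinBandwidthWeightAction 10

# Review the policy.
Get-NetQosPolicy -Name "Hyper-V Replica"
```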

If you use certificate-based authentication, Hyper-V Replica encrypts the traffic. If you use Kerberos-based authentication, traffic is not encrypted.

Isolate traffic for replication

To isolate Hyper-V Replica traffic, we recommend that you use a different subnet for the primary and Replica sites.

If you want to isolate the replica traffic to a particular network adapter, you can define a persistent static route which redirects the network traffic to the defined network adapter. To specify a static route, use the following command:

route add <destination> mask <subnet mask> <gateway> if <interface> -p

For example, to add a static route to the 10.1.17.0 network (example network of the Replica site) that uses a subnet mask of 255.255.255.0, a gateway of 10.0.17.1 (example IP address of the primary site), where the interface number for the adapter that you want to dedicate to replica traffic is 8, run the following command:

route add 10.1.17.0 mask 255.255.255.0 10.0.17.1 if 8 -p
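On Windows Server 2012 and later, the same route can also be expressed with the NetTCPIP cmdlets. This sketch reuses the example values above (the 10.1.17.0/24 network, gateway 10.0.17.1, interface 8); Get-NetAdapter is included because the interface number has to be looked up first.

```powershell
# Find the interface index of the adapter to dedicate to replica traffic.
Get-NetAdapter | Format-Table Name, ifIndex, Status

# Add the route to the Replica site through interface 8.
# When no policy store is specified, the route is also saved persistently.
New-NetRoute -DestinationPrefix "10.1.17.0/24" -InterfaceIndex 8 -NextHop "10.0.17.1"
```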

Virtual machine access traffic

Most virtual machines require some form of network or Internet connectivity. For example, workloads that are running on virtual machines typically require external network connectivity to service client requests. This can include tenant access in a hosted cloud implementation. Because multiple subclasses of traffic may exist, such as traffic that is internal to the datacenter and traffic that is external (for example, to a computer outside the datacenter or to the Internet), one or more networks are required for these virtual machines to communicate.

To separate virtual machine traffic from the management operating system, we recommend that you use VLANs which are not exposed to the management operating system.

Designating a Preferred Network for Cluster Shared Volumes Communication

In most cases, Microsoft recommends that you allow your failover cluster to automatically choose the network for Cluster Shared Volumes (CSV) communication. However, you can designate one or more preferred networks for CSV.

When you enable Cluster Shared Volumes, the failover cluster automatically chooses the network that appears to be the best for CSV communication. However, you can designate the network by using the cluster network property, Metric. The lowest Metric value designates the network for CSV and internal cluster communication. The second lowest value designates the network for live migration, if live migration is used (you can also designate the network for live migration by using the failover cluster snap-in).

Another property, called AutoMetric, uses true and false values to track whether Metric is being controlled automatically by the cluster or has been manually defined.

When the cluster sets the Metric value automatically, it uses increments of 100. For networks that do not have a default gateway setting (private networks), it sets the value to 1000 or greater. For networks that have a default gateway setting, it sets the value to 10000 or greater. Therefore, for your preferred CSV network, choose a value lower than 1000, and give it the lowest metric value of all your networks.

To designate a network for Cluster Shared Volumes

- On a node in the cluster, click Start, click Administrative Tools, and then click Windows PowerShell Modules. (If the User Account Control dialog box appears, confirm that the action it displays is what you want, and then click Yes.)

- To identify the networks used by a failover cluster and the properties of each network, type the following:

Get-ClusterNetwork | ft Name, Metric, AutoMetric, Role

A table of cluster networks and their properties appears (ft is the alias for the Format-Table cmdlet). For the Role property, 1 represents a private cluster network and 3 represents a mixed cluster network (public plus private).

- To change the Metric setting to 900 for the network named Cluster Network 1, type the following:

(Get-ClusterNetwork "Cluster Network 1").Metric = 900

Note: The AutoMetric setting changes from True to False after you manually change the Metric setting. This tells you that the cluster is not automatically assigning a Metric setting. If you want the cluster to start automatically assigning the Metric setting again for the network named Cluster Network 1, type the following:

(Get-ClusterNetwork "Cluster Network 1").AutoMetric = $true

- To review the network properties, repeat step 2.
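To see at a glance which networks the Metric ordering favors, the networks can be sorted by Metric. This is a small sketch; filtering out networks with Role 0 is an assumption made here because such networks do not carry cluster communication.

```powershell
Import-Module FailoverClusters

# Networks that allow cluster communication, lowest Metric first.
# The first row is the network preferred for CSV and internal cluster traffic.
Get-ClusterNetwork |
    Where-Object { [int]$_.Role -ne 0 } |
    Sort-Object Metric |
    Format-Table Name, Metric, AutoMetric, Role
```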

Ref: https://technet.microsoft.com/en-us/library/ff182335(WS.10).aspx

CSV Network for Storage I/O Redirection.

In the case of CSV I/O redirection, latency on this network can slow down storage I/O performance, so quality of service is important for this network. If a storage path between a node and the storage fails, all I/O is redirected over the network to a node that still has connectivity to the storage so that the data can be committed. All I/O is forwarded over the network via SMB, which is why network bandwidth is important.

Client for Microsoft Networks and File and Printer Sharing for Microsoft Networks need to be enabled to support Server Message Block (SMB) which is required for CSV. Configuring this network not to register with DNS is recommended as it will not use any name resolution. The CSV Network will use NTLM Authentication for its connectivity between the nodes.

CSV communication takes advantage of SMB 3.0 features such as SMB Multichannel and SMB Direct to stream traffic across multiple networks and deliver improved performance for I/O redirection.

The network that you configure for CSV must be enabled for cluster communication in the Failover Cluster Manager snap-in under Networks.

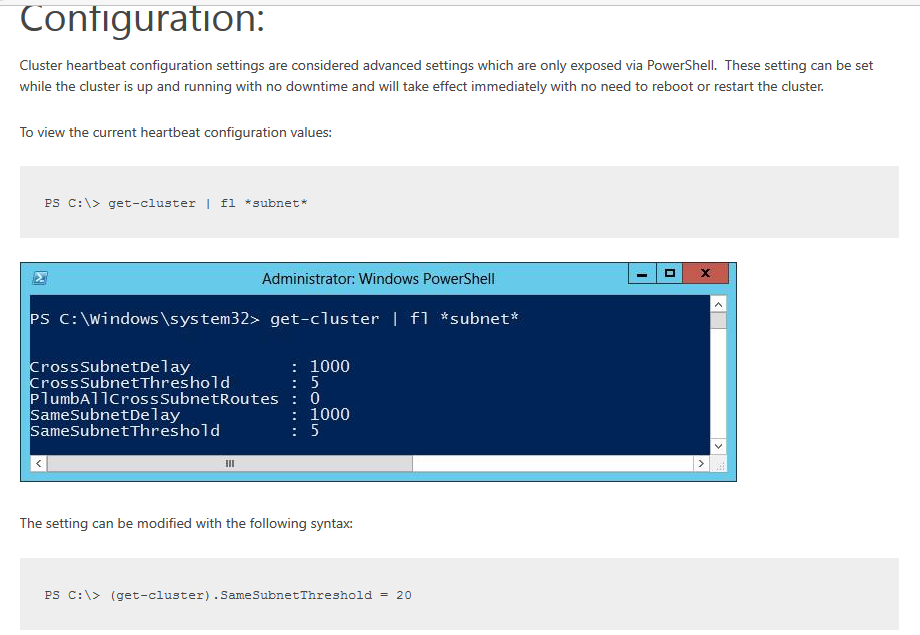

Heartbeat communication and Intra-Cluster communication

Heartbeat communication is used for health monitoring between the nodes to detect node failures. Heartbeat packets are lightweight (134 bytes) and sensitive to latency. If the cluster heartbeats are delayed by a saturated NIC or blocked by a firewall, for example, the cluster node can be removed from cluster membership.

Intra-cluster communication updates the cluster database on all nodes whenever the cluster state changes. Clustering is a distributed synchronous system, so latency in this network can slow down cluster state changes.

IPv6 is the preferred network as it is more reliable and faster than IPv4. IPv6 link-local (fe80) addresses work for this network.

In Windows clusters, the default heartbeat thresholds are increased for Hyper-V clusters.

The default values change when the first VM is clustered.

| Cluster Property | Default | Hyper-V Default |
|---|---|---|
| SameSubnetThreshold | 5 | 10 |
| CrossSubnetThreshold | 5 | 20 |

Heartbeat thresholds are generally modified after the cluster is created. If the threshold values need to be increased, this can be done during production hours and takes effect immediately.

Tuning Failover Cluster Network Thresholds

Windows Server Failover Clustering is a high availability platform that constantly monitors the network connections and health of the nodes in a cluster. If a node is not reachable over the network, recovery action is taken to bring applications and services online on another node in the cluster.

Settings

Four primary settings affect cluster heartbeating and health detection between nodes: a delay and a threshold, each for nodes on the same subnet and for nodes on different subnets.

- Delay: defines how often cluster heartbeats are sent between nodes, as the number of seconds before the next heartbeat is sent. Within the same cluster there can be different delays between nodes on the same subnet, between nodes on different subnets, and, in Windows Server 2016, between nodes in different fault domain sites.

- Threshold: defines the number of heartbeats that can be missed before the cluster takes recovery action. Within the same cluster there can be different thresholds between nodes on the same subnet, between nodes on different subnets, and, in Windows Server 2016, between nodes in different fault domain sites.

Delay and threshold have a cumulative effect on the total health detection time. For example, setting CrossSubnetDelay to send a heartbeat every 2 seconds and CrossSubnetThreshold to 10 missed heartbeats gives the cluster a total network tolerance of 2 × 10 = 20 seconds before recovery action is taken.
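The cumulative tolerance can be read from a running cluster with a short sketch like the one below. It relies on the cluster storing the delay properties in milliseconds (for example, 1000 for one second), which is why they are divided by 1000; the variable names are illustrative.

```powershell
Import-Module FailoverClusters

$cluster = Get-Cluster

# Delay properties are stored in milliseconds; thresholds are heartbeat counts.
$sameSubnetSeconds  = ($cluster.SameSubnetDelay  / 1000) * $cluster.SameSubnetThreshold
$crossSubnetSeconds = ($cluster.CrossSubnetDelay / 1000) * $cluster.CrossSubnetThreshold

"Same-subnet tolerance:  $sameSubnetSeconds seconds"
"Cross-subnet tolerance: $crossSubnetSeconds seconds"
```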

In general, continuing to send frequent heartbeats but having greater thresholds is the preferred method. The primary scenario for increasing the Delay, is if there are ingress / egress charges for data sent between nodes. The table below lists properties to tune cluster heartbeats along with default and maximum values.

| Parameter | Win2012 R2 default | Win2016 default | Maximum |
|---|---|---|---|
| SameSubnetDelay | 1 second | 1 second | 2 seconds |
| SameSubnetThreshold | 5 heartbeats | 10 heartbeats | 120 heartbeats |
| CrossSubnetDelay | 1 second | 1 second | 4 seconds |
| CrossSubnetThreshold | 5 heartbeats | 20 heartbeats | 120 heartbeats |
| CrossSiteDelay | NA | 1 second | 4 seconds |
| CrossSiteThreshold | NA | 20 heartbeats | 120 heartbeats |

To be more tolerant of transient failures it is recommended on Win2008 / Win2008 R2 / Win2012 / Win2012 R2 to increase the SameSubnetThreshold and CrossSubnetThreshold values to the higher Win2016 values.
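Applying the Windows Server 2016 threshold values from the table could look like this sketch, run on any cluster node. The change applies to the whole cluster.

```powershell
Import-Module FailoverClusters

$cluster = Get-Cluster

# Raise the thresholds to the Windows Server 2016 defaults.
$cluster.SameSubnetThreshold  = 10
$cluster.CrossSubnetThreshold = 20

# Review all same-subnet and cross-subnet heartbeat settings.
Get-Cluster | Format-List *Subnet*
```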

If the Hyper-V role is installed on a Windows Server 2012 R2 failover cluster, the SameSubnetThreshold default is automatically increased to 10 and the CrossSubnetThreshold default is automatically increased to 20. After the hotfix (KB3153887) is installed, the default heartbeat values on Windows Server 2012 R2 are increased to the Windows Server 2016 values.

Note:

- The SameSubnetDelay and SameSubnetThreshold values apply only to heartbeat settings between cluster nodes. Changing them delays the heartbeat checks between the nodes.

- These changes do not control the behavior of SMB Multichannel, which Cluster Shared Volumes (CSV) uses. As soon as the TCP connection drops during network maintenance, the SMB channel is affected.

- When the SMB connection drops, the VMs hosted on the CSV volumes are affected, because they cannot get the CSV metadata over the SMB channel.

- Problems on the CSV network (SMB channel) cause event ID 5120 to be logged for the CSV volumes. This affects VM availability.

Based on these points, a network outage longer than 10 to 20 seconds affects the cluster, and there is no way to avoid the impact on the VM resources. Before network maintenance, move the VMs to nodes that will not be affected by the maintenance, or take them offline gracefully. Do not raise the total heartbeat tolerance above 20 seconds.

References

- Network Recommendations for a Hyper-V Cluster in Windows Server 2012: https://technet.microsoft.com/en-us/library/dn550728(v=ws.11).aspx

- Configuring Windows Failover Cluster Networks

- Tuning Failover Cluster Network Thresholds: https://blogs.msdn.microsoft.com/clustering/2012/11/21/tuning-failover-cluster-network-thresholds/